Hi. I’m Tom Bridge. For 20 years, I worked in IT. First for an education non-profit, then for a consulting company, where I handled Apple-facing IT for small and medium enterprises. Our biggest customer had thousands of people and dozens and dozens of interrelated systems. During that time, we developed software to support their businesses, and integrated with half a dozen different MDMs and IdPs of different varieties.

Now, I’m the Director of Product for Device Management at JumpCloud. We make remote work happen at JumpCloud, and we do it through identity management and device management. I work with our engineering teams day in and day out.

I’m here to talk about a subject I may know a little bit about: running after the ice cream truck.

There’s this thing in the States – and I’m hopeful it’s here too – where you’re sitting in the back yard on a hot summer day and you hear it. It’s a jingling of bells, or it’s a cheap MIDI recording of Turkey in the Straw, or it’s a special horn, but it’s the ice cream man, and you’ve got a couple bucks from your last trip to your grandma’s.

And so you tear into the house and up to your piggy bank, you grab that money, and sprint out front looking for that ice cream truck. That sound is almost innate. It triggers in you hope and joy, a treat that is unexpected, but one that you gotta hustle for.

This talk is part history, part self-help course, part commiseration, and part whiteboard session.

If you think about the pace of change – as I do all the time now, with a 9-year-old at home – the amount of change that we have to deal with in just a year or two is the amount of change that used to take much longer to accomplish. I’m not sure if this is good or bad.

There have been a number of positive changes, as engineering teams have moved away from release cycles into continuous delivery, updating things behind the scenes, with or without documentation, notification, and training cycles. And of course, there have been just as many problems.

This talk isn’t here to argue that a faster cycle is better or worse; it’s here to give us all a shared context, a clear understanding of the moment.

The last time I was here in Melbourne, my wife and I spent half a day at the Melbourne Museum, and we took in the history of the city. Melbourne is a lot like my home city of Sacramento in a number of ways. It has a vibrant pre-colonial history, and the Wurundjeri people whose land we’re on today are very much like the Nisenan people of the Central Valley. In addition, the city that was built by colonizers as part of the gold rush of 1851 mirrors the discovery of gold in the Sierra Nevada that led to a rush on the fertile farmland and navigable river lands at the foot of those mountains, where Sacramento was founded.

Gold Rush towns are special places. They know the cycles of boom and bust, and they have some of the most resilient people alive. Cycles like that of economic expansion and contraction can come with a great deal of change for areas, and it’s not always pleasant change for those who experience it. Sound a little familiar, I hope?

Gold Rushes are useful reminders that sometimes you have to act fast to get results, and sometimes you must be patient. With all good things – indeed all hard things – there are some criteria for success that must be planned for and met in order to win out. One of these is planning, and one of these is spontaneity.

This talk is all about the cycle of development that Apple has built across its lifespan, and how we deal with the constant tick tock.

History – From The System to Present

In the beginning, there was the System.

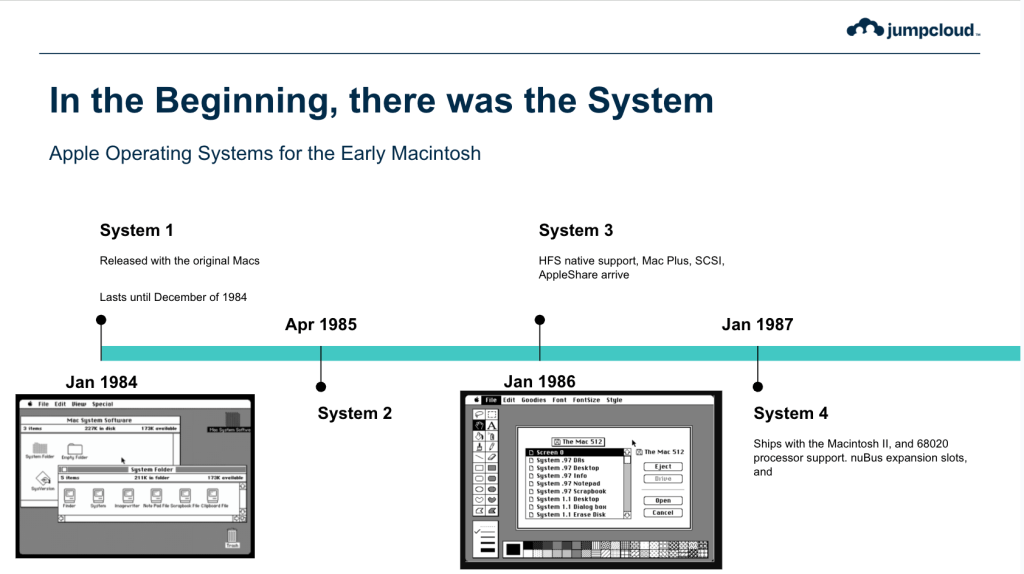

Early Macs ran on System Software, loaded from 400 kilobyte floppy disks, and ran on 68000 series Motorola processors.

System 1 was released with the original Macintosh in January of 1984.

It lasted all of 11 months before its final release in December of that year.

The Finder and the Menu Bar were the two killer apps, hallmarks of a graphical desktop. The Trash got emptied when the Finder was quit, and the whole thing filled 216KB of storage. Launching apps meant ejecting the System disk and inserting the application disk.

It was a magnificent time.

System 2 was released in March of 1985, bringing with it the ability to create Folders in the File System (welcoming HFS for the first time), as well as Screenshots (with Command-Shift-3!), and the ability to select a printer via a brand new networking technology called AppleTalk.

System 3 was released in January of 1986 alongside the Mac Plus and its 68000 processor, just 10 months after the release of System 2, with ground-up support for HFS, SCSI and AppleShare. This was starting to look like a real operating system at this point. External hard disks were possible with the Mac Plus and could be attached to the original 512k Mac.

System 4 was released in January of 1987 with the release of the Mac SE, a year after System 3. System 4.1 followed in March with the Macintosh II, a full-size desktop with an external display, including a 13” model with a Sony Trinitron display. The Mac was now in color. Sorta. 16 colors were supported at 640×480, or 256 colors at 512×384. Support for the 68020 processors, Apple’s first foray toward faster chips, was included.

All of these updates meant getting disks from place to place, with labels printed, and shipped correctly and safely, as magnetic media were sensitive.

The first 4 Systems feel very much of a piece to me, delivering in each release a major chunk of functionality that was likely carved away from a complete product vision for the Macintosh down to the minimum viable product of the original Mac 128k.

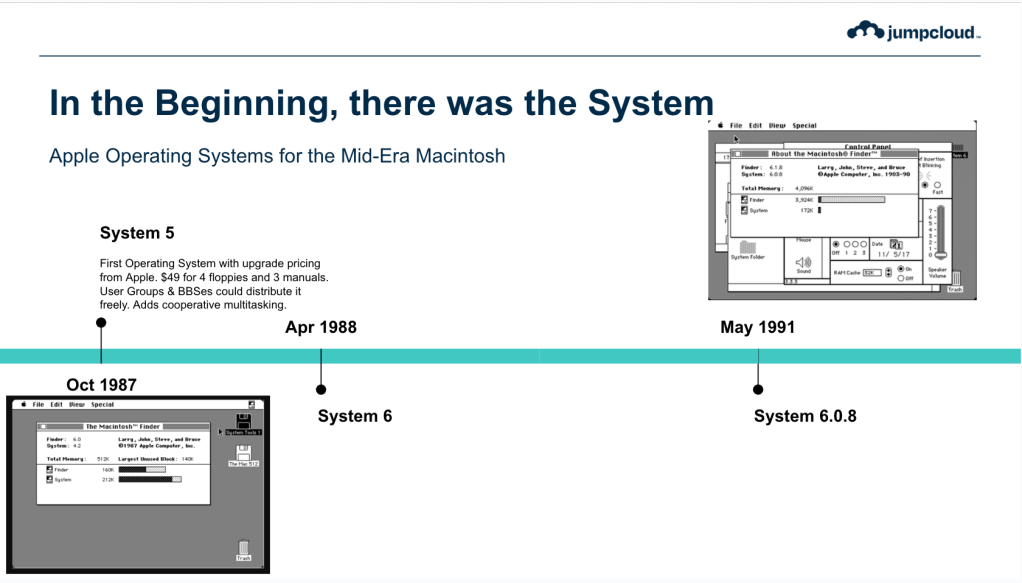

System 5 marked Apple’s fourth major upgrade cycle in under 4 years, and was the first of these upgrades to be paid for – $49 for 4 floppies and 3 manuals. System 5 (in reality Finder 4.2!) was released with cooperative multitasking, which is a little bit of a misnomer, but very workable for the day.

System 6 changed everything for Mac owners again, and ushered in a stability period for the Apple environment. Arriving in April of 1988, and supporting the SuperDrive and the release of the first 68030 Macs (the IIx and the SE/30), System 6 would be the first Apple release to go for a longer period of time. With maintenance releases lasting through March of 1992, and functional releases lasting through May of 1991, it’s Apple’s longest period without what could be classified as a major update.

System 6 introduces PostScript for printing, as well as HFS support for volumes up to 2GB in size. Most importantly, the Achilles heel of the Mac has been identified: 24-bit memory support. Even though the Motorola processors they are using are 32-bit capable, an early design choice has limited addresses to just 3 bytes, or 24 bits. RAM limits become a major frustration for developers and users alike.

It’s 1991, and 3 years have now passed since the release of System 6, and Mac users may have been wondering, after such a rapid pace of development, what could Apple possibly be thinking is up next? What have they been DOING down there at Infinite Loop?

After the release of System 6, Apple knew they had some choices to make. In a summit meeting in Pescadero, California, Apple engineers gathered to debate what had to happen next, and how quickly it could happen.

They started with Pink and Blue index cards – some additional Red and Green cards were there also, more on that in a bit – and the basic theme was: “Fix it in System 7” (Blue) and “Fix it in System 8” (Pink)

Blue cards were meant to be “quick wins” while Pink cards were meant to be longer solutions to thornier, more theoretical problems. The Blue Team became known as the Blue Meanies, in tribute to the antagonists from Yellow Submarine, a 1968 animated psychedelic vision from the Beatles, and because the team was fairly fanatical about what represented a quick win versus what was going to be included in System 8 and beyond.

Architects are supposed to do that – they’re there to keep the narrative working, keep focus on the possible, and keep PMs like me from mucking things up too badly.

So what did they put on one of the first blue cards?

32-bit memory management.

Other cards were added for 32-bit QuickDraw, mandatory cooperative multitasking, formalization of System Extensions from dirty hack to feature, filesystem niceties, Drag & Drop, AppleScript, Pub/Sub, TrueType, and a colorized UI throughout the system.

Getting 32-bit Clean

The Mac Plus was the first Mac that could reach 4MB of RAM, the practical ceiling for those early machines. The original 128KB Mac could only dream of such luxury. Between floppies that only held 400KB, and the expense of RAM in 1984, the early Macs shipped with 24-bit memory addresses, which on paper is enough to address 16MB. Actually – they effectively shipped with 22-bit memory addresses for RAM, which is where the 4MB figure comes from, but that wasn’t a big deal because there just wasn’t a worry about going over.

Though there was a 24-bit address limit for memory, the registers were still 32 bits wide, meaning that you could be “clever” and stash things in the high byte of the register.

By 1988, most Macs are shipping with 8MB of RAM, but the operating system can’t really do much with more than that.
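
If you want to check the arithmetic on those address widths, here’s a back-of-the-envelope sketch. It’s Python purely for convenience, the helper names are mine, and it only counts how many bytes an address of a given width can name; it says nothing about how any particular Mac carved up that space.

```python
def addressable_bytes(bits: int) -> int:
    """How many distinct byte addresses an address `bits` wide can name."""
    return 2 ** bits


def human(n: int) -> str:
    """Format a byte count as whole GB, MB or KB when it divides evenly."""
    for unit, size in (("GB", 2 ** 30), ("MB", 2 ** 20), ("KB", 2 ** 10)):
        if n >= size and n % size == 0:
            return f"{n // size}{unit}"
    return f"{n}B"


for bits in (22, 24, 32):
    print(f"{bits}-bit addresses: {human(addressable_bytes(bits))}")
# 22-bit addresses: 4MB
# 24-bit addresses: 16MB
# 32-bit addresses: 4GB
```

Every extra address bit doubles the space, which is why giving up that one high byte cost so much.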

To learn more about this era, I went and talked to a few devs from that era, including some still active today. Some have agreed to go on the record with their experiences, and others have wished to remain anonymous to prevent the sins of the past from spreading.

When I wanted to get a better handle on this, I asked Rich Siegel about the memory management systems on the early Mac:

So, the original 68000 had a 24-bit address space, which meant that the high-order 8 bits of any given pointer value were unused. Since resources were limited (the original Macs only had 128K of RAM and an 8MHz clock), it was typical to use that valuable 8 bits for other things.

A common application for that storage was metadata about the pointer itself; for example, the Mac memory manager had the concept of relocatable memory storage: you could ask the API to allocate memory, and it would give you back a pointer to a pointer (called a “handle”). The first-order pointer was the address of a pointer in a table which pointed to the actual storage (a “master pointer”).

If the OS needed to, it could relocate the memory that had been allocated, and after doing so, it would update the master pointer. Thus, the original handle returned to the client code never changed, even if the physical location of the allocated memory did.

In a 24-bit address space, the memory manager would use the high byte of the master pointer to store metadata about the allocated block: whether it was “locked” (relocation prevented), a “resource” (a specific type of data read from disk), “purgeable” (could be released to relieve memory pressure), and such like.

In a 32-bit world, that metadata now had to go somewhere else. The problem was that a lot of client code would examine and/or manipulate the master pointer flags directly, which broke; or would mask off the pointer values and use the resulting 24-bit address directly for calculations.

These things were often done in the name of performance and efficiency (and they were effective, since API calls on 68K could be quite expensive), but during the move from 24-bit to 32-bit addressing, every place that was done turned into a land mine.

(And that’s just the intersection with the Mac memory manager; it was quite common for highly optimized 68K assembly code that *didn’t* use the Mac memory manager to use the high byte of pointers to store metadata about whatever data structure the pointer represented, because it was a single mask operation.)
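
To make Rich’s description concrete, here’s a toy model of handles, master pointers and high-byte flags. Treat it as a sketch under loud assumptions: it’s Python rather than 68K assembly, the flag positions are illustrative, a list index stands in for a handle, and none of these names are the real Memory Manager API.

```python
ADDRESS_MASK = 0x00FFFFFF  # low 24 bits: all the address a 24-bit system used

# Metadata kept in the high byte of a master pointer (positions are illustrative).
LOCKED = 1 << 31
PURGEABLE = 1 << 30
RESOURCE = 1 << 29

master_pointers = []  # stand-in for the table of master pointers


def new_handle(address, flags=0):
    """Store a master pointer and return its index, our stand-in for a handle."""
    master_pointers.append(flags | address)
    return len(master_pointers) - 1


def relocate(handle, new_address):
    """Move the block: the master pointer changes, the handle does not."""
    flags = master_pointers[handle] & ~ADDRESS_MASK
    master_pointers[handle] = flags | new_address


def clever_deref(handle):
    """The shortcut client code took: mask off the high byte, skip the API call."""
    return master_pointers[handle] & ADDRESS_MASK


h = new_handle(0x0012F000, LOCKED)
assert clever_deref(h) == 0x0012F000   # fine while addresses fit in 24 bits

relocate(h, 0x0022F000)
assert clever_deref(h) == 0x0022F000   # same handle, block has moved

# Under 32-bit addressing the high byte is part of the address itself.
h32 = new_handle(0x0112F000)           # a block that lives above the 16MB line
assert clever_deref(h32) == 0x0012F000  # the mask now yields the wrong address
```

That last line is the land mine: nothing crashes at the mask, the code just quietly reads or writes somewhere it shouldn’t.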

With System 7, Apple declared that 32-bit clean was the way to be, and that using the high byte of a given memory pointer to store metadata – or any data – was a great way to have your app crash or conflict. A lot of engineers had to make room for the transition.

Many developers were, in fact, making opportunistic use of available space without considering the future implications (or with a decision that the risk was worth it).

Apple also recognized that, even as it made 32-bit Clean the place to be, it still needed to support 24-bit memory addressing as a way to make sure that the organizations making code for the Mac platform could still operate while atoning for old bad behavior.

There was a checkbox added to the Memory Control Panel allowing you to pick which mode to run your Mac in.

Getting Clean meant atoning for old behaviors, even if the assumptions behind them were good at the time.

TechNote 212 from Apple in late 1988 has one of the very best software engineering maxims I have ever heard:

“Don’t make any assumptions, even if those assumptions are currently true. The future will change.”

Andrew Shebanow, TechNote 212

Getting 32-bit clean was all about supporting your existing customers through the change. It was some time before older players did it, and many would call it the challenge of their careers to rearchitect their code.

But that wasn’t the only challenge Apple Developers and Admins faced.

PowerPC Transition

By the release of the 68040 (or ‘040) processors, Motorola and Apple were starting to struggle to keep up with the Intel world. Clock speed was king, and 40MHz in the ‘040 series was enough to keep the Mac humming, but it wasn’t clear if there was going to be a next generation that was faster/better/stronger than these 32-bit chips. The 88000 series at Motorola were not as bulletproof and were a bit disaster prone.

The Motorola and Intel chips of the day were all based on CISC architecture – Complex Instruction Set Computing – designed to limit how much assembly you needed to write by baking complicated operations directly into the instruction set. In the 1970s, IBM began to experiment with processors that had fewer, simpler instructions, but could run them substantially faster: RISC, or Reduced Instruction Set Computing.

You’ll notice we’re focused solely on the Blue Meanies at this point in time, but that doesn’t mean that the Pink Team at Apple were anything but busy. Pink was focused on building a very different kind of Mac OS. Through a partnership with Motorola and IBM – the AIM Alliance – Apple was hard at work looking at different futures for the Pink project.

One of the results here was the focus on IBM’s Performance Optimized With Enhanced RISC (POWER) program. The PowerPC chipset was Apple’s future, beginning with System 7.1.2 and the Power Macintosh 6100/60.

The challenge here was a wholesale change to the kind of instruction sets that ran in RISC as opposed to CISC conditions. Apple had a challenge here: how do you get people to rewrite everything? They evaluated options that would put both a PowerPC and 680x0 chip in the same box, but those lost out on cost. An emulation layer, though, became possible, despite the performance hit.

Project Star Trek, and later Project Cognac, both provided major work for the porting of the Apple Operating System to PowerPC chips. That led to a solid emulation layer for apps that had not yet been ported to native code. Developers could ship fat binaries that contained executables for each processor family without much more than a recompile, and those who needed more could get by on the emulation layer on these substantially faster processors that ran at twice the clock speed of the existing ‘040 chips.

The transition was widely regarded as a huge success.

Pink and the Demise of System 8 (1994-1997)

We’d talked about Blue & Pink before. The Blue Meanies were gathering steam, and Pink was floundering. As the new system slipped further and further into the future on the heels of bloat and feature creep, engineers defected from the Pink team to actually ship their features to the active operating system.

Pink’s work around System 8 – called Copland internally – began to suffer from second system syndrome, and the delays began to pile up.

Taligent, a joint project with IBM to get Apple’s OS running on Intel chips, suffered from a clear lack of end goals beyond the imaginary world of what’s possible, and a conflicting set of priorities around what had to ship. It was eventually spun out into its own company, which would later be absorbed by IBM. Infighting was legendary, goals weren’t aligned, and the users suffered for lack of a modern OS.

The Rise of Rhapsody (1997-2001)

At the very end of 1996, Apple spends $400M in cash they largely do not have to buy NeXT, founded by Steve Jobs after his 1985 ouster from Apple. They beat out another former Apple pair, Steve Sakoman and Jean-Louis Gassée of Be.

The OpenStep programming environment that Sun and NeXT co-developed was the future of Apple’s development, and the – forgive the pun – next generation operating system from NeXT gave Apple a modern foundation to dig itself out with.

Mac OS is still being developed, of course, and Mac OS 8 and 9 are released in 1997 and 1999, but it’s clearly a dead OS walking given the presence of NeXT folks everywhere on the campus at Infinite Loop.

Sure, Mac OS 8.6 eventually introduces a nano kernel to handle fully pre-emptive multi-tasking the way Copland was intended to, but it’s far too late.

In the spring of 1997, Apple releases Rhapsody to developers. The name is a bit of a play on words. Mac OS 8 was supposed to be Copland, and the next version was supposed to be Gershwin. Gershwin is, of course, the composer of Rhapsody in Blue. The whole thing was supposed to be clever.

Developers see that on top of the new Mach 2.5 kernel and 4.4BSD UNIX are two options: Yellow Box APIs for OPENSTEP, and a Mac OS Blue Box compatibility layer. If you want to develop for all the great new features of the Rhapsody environment – fully pre-emptive multitasking, incredible speed and power, everything folks were after Apple to build – you had to port ALL your code over to OPENSTEP and rewrite everything from scratch.

Sure, your old code would RUN in the Blue Box, but you were paying a pretty hefty price for doing so, in that you couldn’t talk directly with the hardware you were running with.

This did not fly with developers. Adobe and Microsoft famously buttonholed Apple to tell them they wouldn’t port their entire apps to OPENSTEP; there had to be another way.

I can only imagine how fun it was to be in Developer Relations at the time.

With key players like Adobe and Microsoft unable to support Rhapsody entirely, Apple is faced with a predicament. Push forward without the core applications necessary to serve your customer base in your best operating posture? Or concentrate on a better path for your developers?

It’s a choice that Apple can’t say no to.

In the summer of 1998, Apple introduces the iMac, a new all-in-one Internet-forward Mac that abandons old standards in favor of new designs. This is the new Apple, led by Steve Jobs again. Jobs announces Mac OS X at WWDC and a full transition strategy to replace “one of the only 2 high volume OSes in the world.”

Of Rhapsody, developers said “Nice Tech, didn’t give developers what they wanted.”

Apple introduces Carbon – a shared library for Mac OS 8 and Mac OS X. They said that most developers could make their apps ready for Carbon in 1-2 months, with initial Bring Up in 5 days or less, for the most part. Apple said that 90% of existing Mac OS Apps Code would work with Carbon.

They still had Yellow Box – now with a fancy new name we all recognize, Cocoa – and developers could still make the choice to fully port over everything to native frameworks instead of using the Carbon APIs.

When I talked to one developer about the divide between Carbon and Cocoa, though, the path was a lot less clear:

“It was a very grudging concession, because from the outset, the Mac OS X implementation of the Carbon API stack implemented the “punish people for not keeping up” mentality. Applications built using it were visibly and behaviorally different from AppKit applications. There may have been a valid technical reason why they did that, but I can’t imagine what it might have been. But it created a lot of problems with “API shaming”.”

Most organizations, though, were satisfied with the minimal rewrite required to support Carbon, and launched on Mac OS X in that environment.

Carbon code also offered a huge advantage: the Carbon framework worked on Mac OS 8.1 as well as on Mac OS X.

The early days of Mac OS X are interesting for a lot of reasons. The first among them is overlap between OSes and their functions and operation. There’s a fair amount of trepidation with the release of Mac OS X in March of 2001 (about 18 months later than Steve had said in his 1998 introduction of Carbon).

From Mac OS 9 to Mac OS X (1999-2006)

Thanks to the work that Apple had done with Carbon, the transition from Mac OS 9 to Mac OS X was mostly pretty clean. You could build a Carbon app and have it work in both places while you could transition to Cocoa APIs over the next five years. But that didn’t work for every app, and Apple knew they wouldn’t get everything moved in time.

They announced Classic as a way to continue to run even the oldest of Mac OS apps on the new operating system. Classic was a whole second operating system inside the first, and allowed developers to skate a bit longer. Indeed, Classic ran on PowerPC Macs all the way through Mac OS X 10.4.11, and gave developers just a little bit longer to complete a full transition.

In many ways, it could be a shame factor for developers to be in that box, though, and explaining what it would take to get your app running in that state was not insubstantial.

The hardest part had been accomplished by Rhapsody in getting developers across the line by convincing them that they needed to port to Carbon or Cocoa. Carbon was the easier path, but

From PowerPC to Intel (2005)

Aware of the flaws of PowerPC’s development cycle – famously, the G5 could not be moved to portable Macs, and the 3GHz barrier was a hurdle the tower chips couldn’t crest – Apple began to talk to Intel about an agreement. By WWDC 2005, this had come to a head: IBM and Motorola had let them down for the last time, and Apple was going to move. Specifically cited were the challenges that they were having fabbing chips below 90nm, which is what prevented the 3GHz G5 from existing, a broken promise that hurt particularly badly with Intel already well past 3GHz.

At WWDC, Steve Jobs announced that every version of Mac OS X so far had already been running on Intel-powered devices since 2001 in a lab on the campus of Apple.

A pathway forward was set: if you were writing Java, or Scripts or anything like that, you had no work to do, everything would be just like it was then. For those folks writing Cocoa or Carbon, and compiling using the new Apple IDE, Xcode (Version 2 had shipped earlier that year), it was a matter of checking a box at compile time, and a Universal Binary would be created. There was a little work to do if you were using those tools, granted, but the path was clear.

If you were still compiling with Metrowerks, though,

“Those of you’ve been through a Carbon Port, or a Mac OS X kind of port, this was nothing like that.” – Theo Gray, Mathematica.

He famously said that they changed less than 20 lines of code in all of Mathematica to get this done.

But Apple knew that the path for those Metrowerks customers was going to be longer than they wanted, so they took a page from previous transitions and wrote Rosetta, an on-the-fly dynamic binary translation layer that shipped with the operating system and ran the PowerPC binaries on Intel, albeit slightly slower.

It wasn’t enough for Apple to offer developers a path to Xcode 2.1 and Universal Binaries; it was Rosetta that made this transition fully possible. Moving code across IDEs remains a non-trivial task for any codebase, and Rosetta gave Apple developers the breathing room they needed not to rush the transition. Classic apps, which required the presence of the Mac OS 9 embedded systems, would work awhile longer, but would soon be abandoned.

At WWDC that year, Apple demonstrated Photoshop, Office, Quicken, and other unmodified apps on Intel running in Rosetta, and working just fine. The average user didn’t have to know or care whether or not they were running natively.

Apple said that 2006 and 2007 would be transition years where they sold both PowerPC and Intel Macs, and got commitments from Roz Ho of Microsoft and Bruce Chizen of Adobe on stage at WWDC to getting things ported to Intel and Xcode.

The commitments were made, and the transition was a huge success for Apple and Developers alike.

Mac OS X to OS X to macOS (2006 – 2018)

Beginning with Mac OS X 10.2 – Jaguar – Apple moved to an annual release cycle for the new operating systems.

In all of this, there have been some challenges, though, including a bit of a middle finger from Apple to developers related to the Carbon API. Apple kept promising that Carbon would be a great way to develop apps. It was, until 64-bit apps came along. Carbon never got 64-bit APIs, and Apple kept promising to devs that they’d eventually come. Until they pulled the rug out, and said that if you wanted to move to fully 64-bit apps, you had to migrate to Objective-C and Cocoa.

That message was particularly frustrating to developers when it came.

10.7 brings Lion, and every year since its arrival has featured a new operating system.

Lion indeed marked the first mostly-online delivery system, with download coming from the App Store in the form of a monolithic application installer that handled the changeover. No longer did you have to boot from a CD or DVD with the operating system to install it. Lion would also be sold on a small USB Key for those places that required physical media for offline setup.

Every year, major changes. New APIs, new features for management, new challenges.

From Intel to Apple Silicon (2020)

In the summer of 2020, Apple announced a transition from Intel chips to their own chips at the heart of the Mac. For more than a decade, Apple had been making their own processors for the iOS family of devices. Apple’s acquisition of P.A. Semi in 2008 laid the groundwork.

Much like the transition to Intel, Apple announced two major programs as part of the move: Universal 2 Binaries made by Xcode contain binaries for both processor families. In addition, Rosetta 2 will translate existing Mac apps at install time, as well as handling JIT compilers.

Unlike Rosetta, though, it wasn’t installed by default and would be installed at the time it was required, or via command line hook. This change drove a lot of admins a little bit nuts, because the framework just wasn’t that large.

40 Years of Mac System Software

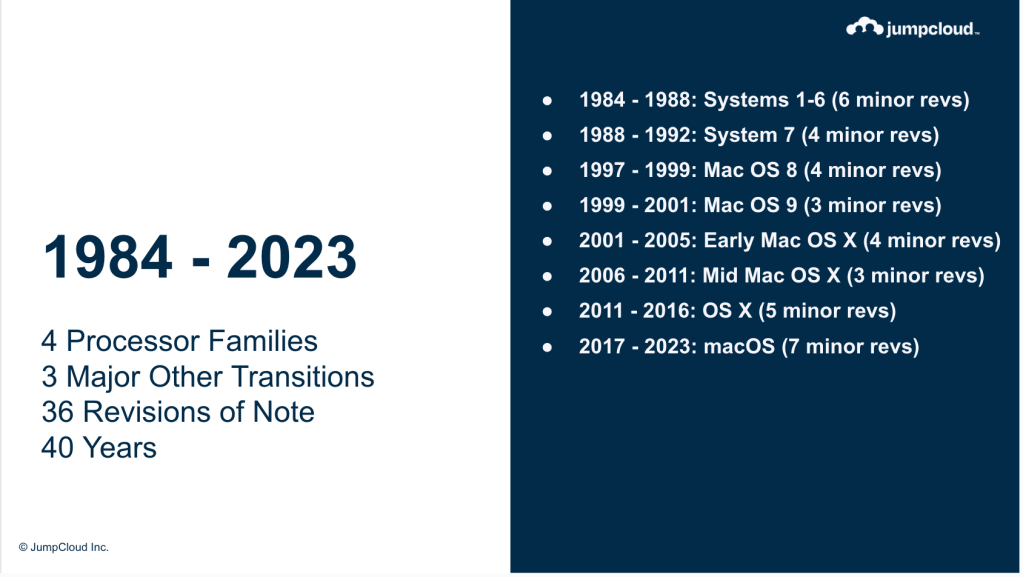

At the end of all this we have traveled just about 40 years in time, and we’ve passed through 36 notable releases of the operating system that underpins it all, 4 processor families and 3 transitions between them, as well as 3 other transitions of note.

The Apple Cycle, Today

As we look at the cycle we’re in now, there’s a pattern that has taken shape and held for a long period. Here’s how it goes:

The Phases

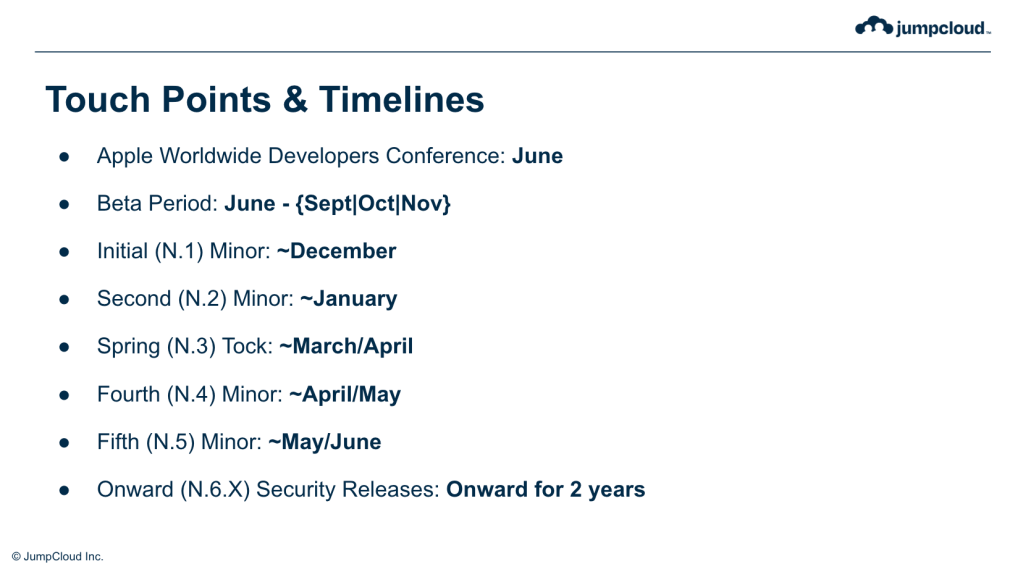

Worldwide Developers Conference remains in early June each year, and Apple releases a new version of the operating system to developers and systems admins alike. These betas contain new APIs and technologies, new MDM profiles and enrollment methods, new versions of Swift, new frameworks, all manner of new and hopefully improved versions of things that represent our livelihoods.

There’s a few months where we get to kick the tires and experiment with new things, report back how things are going, and then prepare for the wide rollout of the new technologies. Early testing favors the prepared, and deep exploration of the state of play is rewarded with knowledge.

In many ways, this is the gold rush time, when experimentation and feedback are rewarded with nuggets of improvements and possible reform. A major campaign from Mac Admins in 2022 helped ensure that the new Login Items profile for MDM was ready ahead of the release of macOS Ventura in the fall. This new behavior in the OS was met with a cascade of admins explaining a strong need to customize the experience of our colleagues in business and provide for them a curated experience.

Indeed, many such changes have been the result of feedback from the community writ large. While it often feels as if Feedback and Radars are not met with immediate change and capitulation to the whims of our learned colleagues, it’s clear that Apple has spent substantial efforts in their Enterprise Workflows team in listening to the needs of Apple Admins throughout the world. The channel #appleseed-private on the Mac Admins Slack remains a strong source of inspiration for a world with a great deal more cooperation than in previous times.

At the conclusion of the beta period, if you haven’t gotten the changes you’re looking for, you’re almost certainly in for a wait of about six months. The way the release structures tend to go at Apple works out to some subphases we can cover here.

The Fall .0 release is the A build cycle, most often. Occasionally – as in Big Sur – the A release is meant for new hardware, and the primary release, as with 11.0.1, is given the B branch. Many release cycles don’t see a B branch, or see only a very stubby one. The .1 or “bug fix” cycle often carries C builds, and often contains 1 or 2 things that got bumped out of the A release in the Fall; it arrives in the middle of December, before the code freeze that precedes the Christmas holiday.

The January .2 release tends to carry security fixes and bug fixes alike, and lasts through until the March/April .3 release, which often is the “tick tock” release to the initial .0 release, and is focused on completing the feature story. The D branch is often also short-lived, giving way to the E, F and eventually G branch, which tends to be the “long term support” set of security releases which carry through to June, when the cycle begins anew.
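
If you want to sort builds by branch letter yourself, here’s a minimal sketch in Python. It assumes a build string shaped like digits, one uppercase letter, digits, and an optional lowercase suffix; that shape is an assumption drawn from commonly seen build strings, not a published Apple specification, and `parse_build` is a hypothetical helper of my own naming. The two strings below are only examples of the format in the Big Sur style.

```python
import re

# Assumed shape: <generation digits><branch letter><build digits>[lowercase suffix]
BUILD_RE = re.compile(r"^(?P<generation>\d+)(?P<branch>[A-Z])(?P<number>\d+)(?P<suffix>[a-z]?)$")


def parse_build(build: str) -> dict:
    """Split a build string into generation, branch letter, number and suffix."""
    match = BUILD_RE.match(build)
    if match is None:
        raise ValueError(f"unrecognized build string: {build!r}")
    return {
        "generation": int(match["generation"]),
        "branch": match["branch"],
        "number": int(match["number"]),
        "suffix": match["suffix"],
    }


for build in ("20A2411", "20B29"):
    parsed = parse_build(build)
    print(build, "-> branch", parsed["branch"], "build", parsed["number"])
# 20A2411 -> branch A build 2411
# 20B29 -> branch B build 29
```

Grouping a fleet inventory by that branch letter is a quick way to see where each machine sits in the cycle described above.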

Older versions of macOS enter a stability-and-security phase upon the announcement of the next operating system, which means they get security updates for approximately 20 months, after which they are all but abandoned.

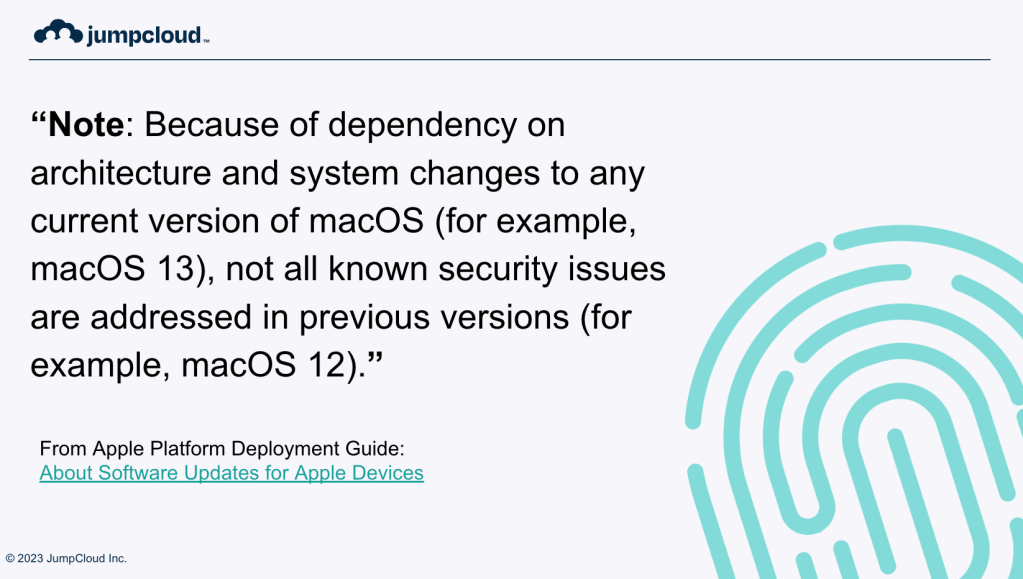

In addition, beginning with macOS Ventura, Apple has publicly commented that only the most recent major release versions see the full patching of security holes. Specifically, they state:

Note: Because of dependency on architecture and system changes to any current version of macOS (for example, macOS 13), not all known security issues are addressed in previous versions (for example, macOS 12).

This largely means that not every security bug that is resolved in the latest major release of macOS is backported to previous versions, which means older versions of macOS may remain vulnerable to security flaws that are fixed in subsequent versions. This may come as a shock to some, but the trendline has been there for years if you review Apple’s security documentation for each release of the operating system.

For the last three years, since macOS 11 Big Sur, Apple has deprecated the old build-focused supplemental security updates, which didn’t change the version information, but did update the build number. The iOS style of version numbering, where the number always increments, was a welcome change for which I was thankful.

Writing Great Feedback Takes Practice

Knowing that feedback cycles are super important is one thing; acting on it is entirely another. The amount of feedback that Apple receives is likely prodigious, though sadly they don’t release numbers.

While it’s not clear to me if every individual FB# is used by the system, it’s certainly within the realm of possibility that Apple is receiving hundreds of thousands or even a million of these requests in a year. Making yours memorable, and making it count, can feel a bit like hoping for a needle in a haystack.

With that said, providing an appropriate amount of detail, as well as information from affected environments, is a good way to make sure that you can feel good about making your case.

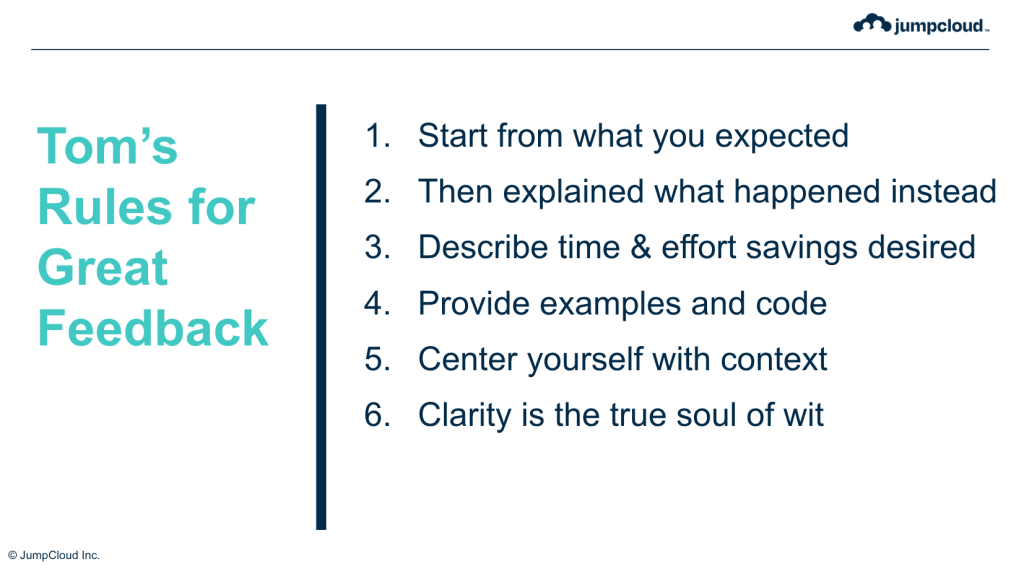

Here are my rules for writing good feedback:

None of this is a guarantee that your wish will be their command, but all of it is a way to think about how you communicate with the powers that be. Well-written feedback can and should be shared with other admins and developers. Which takes me to…

So Does Lobbying For Your Needs as a Developer and an Administrator

Getting other people to see your plight and share in it may help get your problem fixed faster – or at least help those at Apple who prioritize such things understand the shape of the problem better – and there are some welcome side effects such as community building that may well happen.

Chances are, if you need something from Apple, you’re not the only one. One need only look at the community’s success in getting a new payload for Login Items, which was the result of a concerted effort to explain the need, to show, most importantly, why it was a demonstrable improvement for end users, and to show why it was compatible with the security environment.

Cycles of Change and Learning

Once you know you’re in a cycle, you can spot the patterns. Spotting when patterns change, and choosing to remain zen amidst that change, represents a major improvement in the quality of your life and your workstream.

We’ve talked about the cycle of Apple events, and though it’s not written on tablets in the center of Apple Park – at least, I don’t think it is – there’s a framework for operating system releases here that is manageable and understandable. These patterns don’t just represent the cycle of events, they also represent a cycle of change and a cycle of learning.

We get new features, so we have to learn how they work. We get new APIs, or new versions of Swift, and so we must learn how they work. New features drop, new hardware sometimes arrives, and so we get practice in patience and engagement. Being ready for the tiny beta cycles of a .2.x release, or the big bang beta cycles in the June and July timeframes, means having a cycle of change and learning in mind.

Some organizations go deep on change management, applying ITIL processes to all operating system updates, or even just configuration changes within their environment. These processes are structured around accountability and engagement with stakeholders, and a bunch of other Six Sigma slash Lean methodologies that often require an MBA to understand, or at least adapt to. They can be ossifications for an organization, but they also bring with them some understanding that change is hard, and doing it right means preparing your organization for change, crafting a plan for change, making the change, and studying its results.

In fact, those are the main phases of any change cycle at all – you need to know what’s going to need to change, prepare all the right people for the change that’s coming, whether that’s your customers or your coworkers, and then deliver a plan of action and vision that drives your team forward. It’s silly to do the whole thing for a one-line configuration profile change, but having a good way to think about all the different kinds of updates lets you figure out what gets to be a passing note in a company Slack channel vs what goes into an all-hands email or meeting topic.

Having a rubric for the shape of change inside your organization is all about making the change cycle one that is transparent, engaged, and accountable for your teams. Whether that’s a customer-facing change that deprecates an old API, or a new policy, or even just a relaxation of an old posture that no longer requires a depth of control, you as the admin or developer are in charge of your customers’ understanding of that change.

Building centers of knowledge and rich information within your organization about the work that it is doing is a huge part of the work that’s required to get the necessary buy-in for change that most of these events require. Whether that’s updating to a new OS, or deploying or sunsetting a tool, or even building out new features of your applications, a strong communications strategy is a psychological benefit. Transitions are challenging. Just look at the four major Apple transitions that we discussed today. Each one came with a communications plan on what the change would be, what the world needed to do to adjust, and when it needed to do that by. Your organization can benefit from publishing its own changelog for management, for software functionality, and you can take away the uncertainty that can plague a change like this.

If you know you’re in a cycle, you can do the following things to give yourself a better understanding of where you are in the change that’s required because of it:

You’re not going to get everything done in a single cycle. There are some items that have to stay on the backlog because, and I want to stress this point entirely: you deserve not to be worked to the bone to accomplish impossible timelines.

There are priorities at play in all of this, and you need to be prepared to pick which things are possible in the current timeframes. Rewriting everything is often an impossibly expensive option that isn’t feasible.

Coping with the Impossibility of Soothsaying

There are a number of people who know what the shape of the future for Mac will be. I don’t think any of them are in this room right now. Without a public roadmap – or the likelihood of one’s sudden appearance in our lives – we’re left gathering information in an information-poor environment. Everyone’s cousin’s friend’s mom totally works at Nintendo, I mean Apple, and knows exactly what this massively secretive company is totally working on, so you know that Super Mario Bros 4: Mario Does Merengue is due out any day now.

There is an impossible volume of supply chain rumors, leaks, threats, bread crumbs and tea leaves to be read as an admin and a dev. Few of them pan out exactly as the stories make them out; indeed, the facts are often right, but the context is sorely missing from the rumor. The noise isn’t worth the tiny bit of signal that they might generate.

There are some changes you cannot un-make, either because they move key data around, or because they do things in ways you cannot undo, but making permanent decisions – truly, one-way door decisions – in a low information environment is the kind of mistake that can cost you in new and awful ways.

The impossibility of certainty, especially in a low-trust, high-noise environment, is a reminder to all of us that making decisions in an information vacuum is a high-risk activity.

Which takes us to probably my most important point.

Making Choices in IT and Development

Making choices in IT and Development comes with costs of time and effort. Indeed, the work that we do can never be all done. There are always new APIs, new methods, new features, new ideas, and they’re all competing against each other and your resource pool.

We’ve talked about Gold Rush periods, periods of intense study and execution, where there’s heavy competition for the same resources and land. The summer beta period feels like this. There’s a lot to learn, quickly, to get things ready for a new operating system version. Sometimes your stuff is straight up broken and there’s triage to do to figure out if it’s you or if it’s Apple that is busted up.

Getting started as early as possible with reviewing what’s new, and how your product and processes work with the new operating system or new APIs, is going to give you the best head start.

When it comes to building your experience, or building software, or even just supporting an existing environment, you have to be ready for new versions of the operating system for the simple fact that Apple continues to build new hardware that you will want to staff your team with, or support your customers on, and you can’t go backward in time. You will have to support these new OSes, if not on release, then after 90 days, when you can no longer block updates in a sane and feasible manner.
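That 90-day window can be turned into a concrete date to plan against. Here is a minimal sketch in Python, assuming a fixed 90-day maximum deferral as described above; the function names and the release date in the usage example are hypothetical and purely illustrative.

```python
from datetime import date, timedelta

# Assumption: the maximum deferral is the 90 days mentioned above.
MAX_DEFERRAL_DAYS = 90


def support_deadline(release_date: date, deferral_days: int = MAX_DEFERRAL_DAYS) -> date:
    """Return the date by which the new OS has to be supported."""
    return release_date + timedelta(days=deferral_days)


def days_remaining(release_date: date, today: date) -> int:
    """Return how many days of deferral are left, never going below zero."""
    remaining = (support_deadline(release_date) - today).days
    return max(remaining, 0)


if __name__ == "__main__":
    hypothetical_release = date(2023, 1, 1)  # illustrative date only
    print(support_deadline(hypothetical_release))
    print(days_remaining(hypothetical_release, date(2023, 3, 1)))
```

Putting the deadline on a calendar on release day makes it harder for the end of the deferral window to arrive as a surprise.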

Breaking down your roadmap means following a set of clear steps, as you look at the future:

Once you have a roadmap that’s underway, you might be constantly re-evaluating your choices. That’s normal and natural. Market conditions can change.

You might find yourself in a place where you suddenly need to make rafts, not boats. One product manager I respect has an article up where he talks about what the needs of businesses are this year, and while I think his predictions are certainly dire, I don’t think they are fundamentally untrue. We are in a market correction, we are in a place where startups need to succeed more rapidly, or at least offer stability in the face of challenges.

Conclusion – Centering Empathy in Your Practice – Including Yourself

I need us to go back to the beginning of this talk for a minute.

A couple of weeks ago, I was talking with my friend Arek Dreyer about the relentlessness of the work that we do – indeed, product folks are in the same cycle as everyone else here – and I mentioned that sometimes it felt like I was sprinting down the street after a cabbage truck. It can feel at times like you’re running after a crappy reward. Now, I love a good cabbage dish – roasted cabbage hearts, halushki, even a great cole slaw is delicious – but not one of them is worth putting in a monster effort all the time.

I spent a lot of time watching old Apple Keynotes to prepare for this talk, including the Intel announcement keynote in summer of 2005, and the announcement of Rhapsody in 1998. Both of those keynotes lay out the incredible possibilities that those changes represented. They catered, at their core, to the desire of every engineer and IT professional to make a difference with what they do.

I know that folks I know at Apple work incredibly hard on what they’re doing, whether it’s keeping the routing system going for Apple Maps, enhancing Siri with new intents and knowledge bases, or helping to communicate the needs of Enterprise to developer teams, or working to communicate the goals of the platform to new customers.

One place I’ve struggled the last few years is that the announcements feel forced, and they just represent a bolus of work, and not an increment of wonder or joy.

Because I’ve got a 9 year old who was 6 when the pandemic started, I spent a lot of time watching Encanto with our family. The story is one of gifts, of skills, of work, of stress, of generational trauma, and it has an absolutely spectacular song that hit me like a ton of bricks.

Luisa Madrigal, the strong sister, begins to falter as their gifts falter. She isn’t sure of who she is without the ability to hold aloft a whole cart and its horses.

Indeed, the moment where she sings:

“But wait, if I could shake the crushing weight of expectations

Would that free some room up for joy

Or relaxation, or simple pleasure?”

It just felt like my own resolve came crashing down. Was it that I was finding less joy in my work, or less joy in the products we support? Or was it that they just came to represent the crushing weight of expectations – of understanding the products, of understanding their edges and their challenges, of the whole adventure related to deploying them, or keeping them going, or just… all of it all at once.

Of late, I’ve tried to build a separation in my work brain between the consequences of an announcement and the announcement itself. Trying to make space to remind myself of the joy of the engineering of a product like my AirPods Pro, or the M2 Ultra processor, or even the new OTA Software Update mechanism.

The work is the work, and it shall ever be the work. There is room for joy, for relaxation, for simple pleasures amid the work. It does not have to be a joyless slog through documentation, code and change. In fact, the gold rush can be just as much about the excitement of discovery, the anticipation of new toys, and the thrill of the solution as it is about a lot of testing, a lot of experimentation, and a lot of writing new documentation.

These cycles bring us goods and bads, they bring us challenges and opportunities. How we choose to approach them can be the difference between a long slow jog after a cabbage truck and a joyful sprint after the ice cream truck, the taste of that Klondike bar driving you to catch Mr Whippy before he takes off to another neighborhood.

It can, indeed, be both of those things at once. We contain multitudes.

Walt Whitman wrote:

The past and present wilt—I have fill’d them, emptied them.

And proceed to fill my next fold of the future.

Listener up there! what have you to confide to me?

Look in my face while I snuff the sidle of evening,

(Talk honestly, no one else hears you, and I stay only a minute longer.)

Do I contradict myself?

Very well then I contradict myself,

(I am large, I contain multitudes.)

I concentrate toward them that are nigh, I wait on the door-slab.

Who has done his day’s work? who will soonest be through with his supper?

Who wishes to walk with me?

Will you speak before I am gone? will you prove already too late?

Now. Who wants some ice cream?