About a year ago, as my car crossed the 75,000-mile mark, I started to think it might be time for a new car. My Ford Fusion Hybrid, bought the summer before Charlie was born, has been a real performer, but the edges were definitely starting to show. Fuel economy has been a bit on the decline, the lack of a good car interface is showing, and, frankly, I was feeling like it was time.

My search was, for a long time, pretty casual. I started looking at car news sites as new models came out, and I had a Notes file going for which cars I liked and why. At the same time, I set up a Digit savings goal (Disclaimer: we both get $5 if you sign up with that link) to help me set aside money for a down payment.

There were setbacks and fits and starts as other financial priorities took precedence, but over the winter I hit a high enough target to make a car payment affordable again. That’s when things got real. I started an AirTable to keep track of my different options.

Comparing two options is pretty easy. You start with a Pro/Con list and work it out based on a weighting of options. When you start with a larger field, the decision-making matrix gets a lot more complicated. That got me to thinking about the way we make product decisions, generally, in IT operations. We frequently help organizations compare MDMs from a set group, compare and contrast features, and help the client weight the choices accordingly.

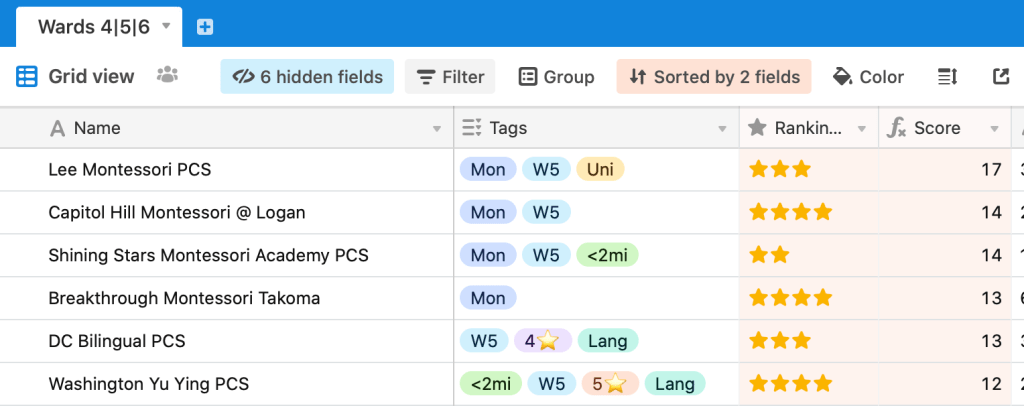

When faced with a similar situation, my wife and I used AirTable to make a weighting system for prospective schools for Charlie. AirTable is highly flexible, and allowed us to easily build a system for understanding our options.

We specified tags and star rankings and used those, along with distance ratings, to create a scoring system. Montessori schools earned extra points, as did schools in our part of town, and we factored in our likelihood of getting in.

We ended up with this formula:

IF(1/Distance > 1, 3, 1)+{⭐️}+IF(Montessori = "Yes", 4, 0) + IF(Uni = "Yes", 0, 1) + IF(Ward = "Ward 5", 2, 0) + IF(Ward = "Ward 4", 1, 0) + {Waitlist Score}

It gave us a guide for handling a complex decision. Sure, it doesn’t beat going with your gut. At the end of the process, we didn’t just go in score order, because there was no adjustment in our formula for intangibles. That changed with the car search this time around.
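If you don’t use AirTable, the school formula above can be restated as an ordinary function. This is only a sketch in Python, not something we actually ran: the parameter names are my stand-ins for the AirTable fields, and the distance is in whatever unit the Distance field used.

```python
def school_score(distance, stars, montessori, uni, ward, waitlist_score):
    """Restates the AirTable school formula; parameter names are stand-ins for the fields."""
    # Under one distance unit away earns 3, otherwise 1 (assumes distance > 0).
    score = 3 if 1 / distance > 1 else 1
    score += stars                              # the star-rating field
    score += 4 if montessori == "Yes" else 0    # Montessori bonus
    score += 0 if uni == "Yes" else 1           # Uni = "Yes" adds nothing; anything else adds 1
    score += 2 if ward == "Ward 5" else 0
    score += 1 if ward == "Ward 4" else 0
    score += waitlist_score
    return score
```

Every term is either a rating passed straight through or a fixed bonus for clearing a condition, which is why nothing in it can account for intangibles.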

The Looking Phase

You can compare cars, and rank their cost, speed, power, fuel economy, cargo space, passenger load, wheel size and more, but that’s only part of the process. There are subjective factors, like how it corners, what it feels like to sit in the seats, and how comfortable your passengers might feel, and that gives you extra dynamics. The same is true of MDMs, Cloud Directories, Solutions Providers, and more. Leaving room in your own rubrics to score intangibles like design UX and code extensibility will give you a better feel for what it all comes down to.

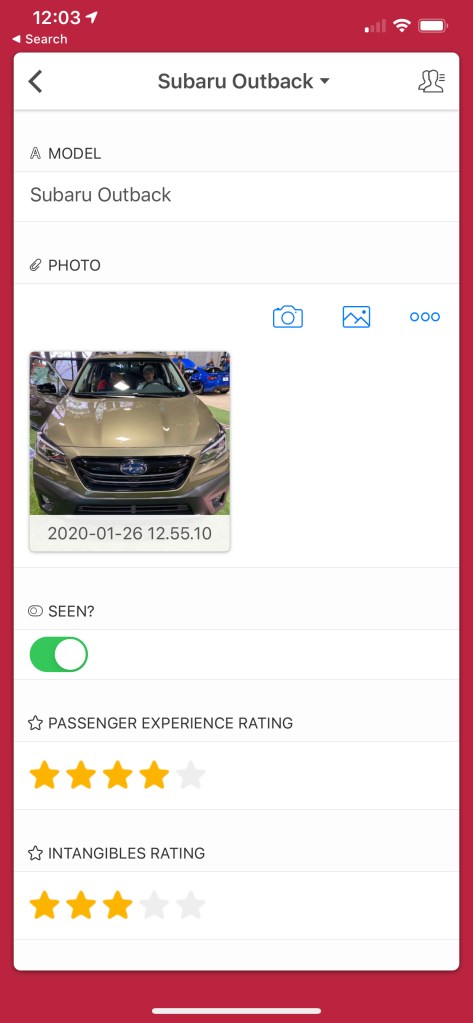

When it came to the search, I didn’t know what I didn’t know, so I went to the Washington Auto Show at the DC Convention Center in early January. I took my best tester, Charlie, and we went and sat in just about every compact crossover from Toyota and Hyundai up to Alfa Romeo and Jaguar. I had made an entry form to rank a bunch of key stats, and a picture field to help remind me of a car’s aesthetic features.

I knew I wanted to see the Subarus, but on my way through to them, I encountered the new RAV4 and Highlander from Toyota. I enjoyed their aesthetics enough to put them on the test-drive list. Though they ended up outside of my price range, I was impressed with the build quality and cabin construction of the Buick Enclave models they had on-site. I had no idea they were so nice! I never thought much of the Buick badge as a whole, but their product was quite nice. Charlie thought they were his favorite of the day.

Sitting in an Alfa Romeo Stelvio will always remind me of the week I spent with one in the Alps this winter, so that was a bit of a sentimental, if completely impractical (they’re sold and serviced at Maserati dealers! Come on!) entry on the list. The Jaguar E-PACE was a dizzying build of practically every little option on their website, but the interior was quite luxe and comfortable. Onto the list it went.

Sadly, Audi, BMW, Mercedes and Mini Cooper all passed on this year’s auto show. While I liked the look of the BMW X1 and X2, I couldn’t get over how terribly rated their console system is. I couldn’t drive a Mercedes and feel good about myself. Mini Cooper sounds more fun than its practical reality. But Audi gave me some thoughts, so I ended up seeking out an Audi dealer to try their new Q3 SUV.

Several friends recommended I check out Hyundais as well. The Auto Show exhibit was great for sitting in just about every version of the Hyundai lineup, from the Kona to the Palisade. I was surprised, though, that you had to go all the way to the top of the line before leather seats made an appearance. I never quite felt at home in the interiors, which is a shame, because their pricing was quite attractive.

Evaluating Your Formula After The First Round

The Auto Show was a great place to be a car buyer. No sales people, just company reps who can’t sell you anything but the dream. It was a great place to evaluate vehicles without any pressure. I sat in car after car, asked question after question, and didn’t have to deal with a pushy rep who wanted to be elsewhere. This wouldn’t be true once I sat down in a showroom.

I spent the weeks following the Auto Show in heavy research mode, watching videos from various car reviewers (Alex on Autos was a regular, as was Doug DeMuro, the Kelley BlueBook team, and others) and tuning my ratings.

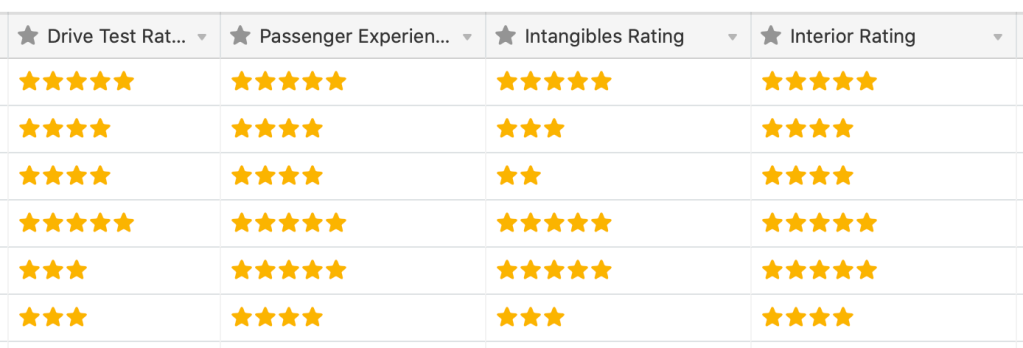

Figuring out what’s working for you and what’s not becomes the challenge after the first round. Initially, I had a few variables I was considering: Passenger Experience, Intangibles, Cargo Space, and Overall Interior. A five-star rating system was an easily configured option in AirTable, so that’s where I started.

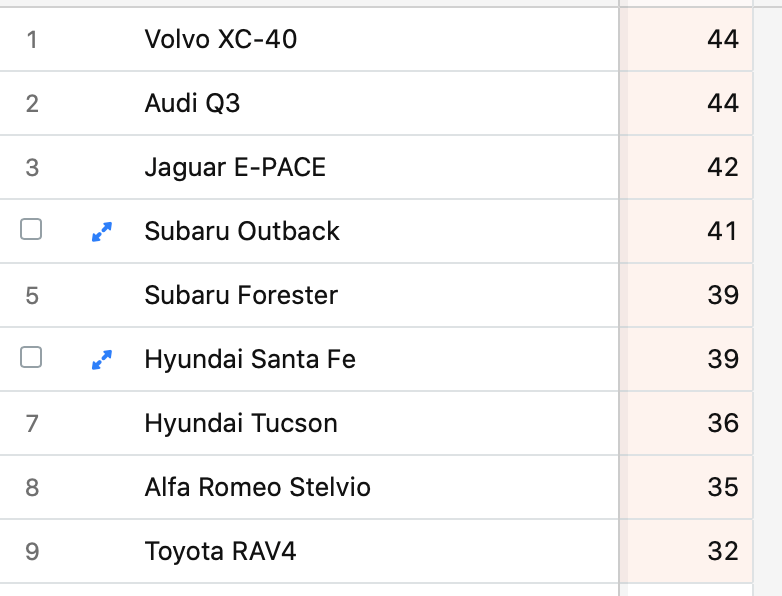

The original version of the scoring formula put the Hyundais on top of the pack due to cargo space and fuel economy. These were two important features, undoubtedly, but I realized that they were floor features. I cared about what the floor was, not what the peak meant above that. I iterated the formula again to give a floor value, and enhanced scoring for features that actually delighted me, instead of just meeting a bare minimum.

But how do you rate something when you can only look at its features and watch videos, instead of using it yourself? You can’t get the full picture of anything until you click the buttons, or push the pedals. On to the test drive phase.

Test Drives

This is where things get real. You need to be ready for the dealership experience, which is to say, you need to be ready to deal with car salespeople. They are professionally trained to get you to buy something massively expensive the day they first meet you.

This is not an ideal outcome for a number of reasons.

What I did was set up another email box just for dealers, and a Google Voice phone number just for the dealers, and use those as a way of keeping these salespeople at bay. There are good salespeople and bad salespeople. The good ones will respect your time and privacy. The bad ones, well, there’s a reason that car salespeople have a particular reputation.

Since you need to surrender your license to a dealer in order to get a test drive, there’s no point in an alias, so just give up your real name.

I test drove two Hyundais, two Subarus, an Alfa Romeo (well, technically…), an Audi, a Toyota, and a Volvo. Here were my general thoughts, if you are in the market for cars.

Toyota RAV4: Toyota updated the looks on the new RAV4 last year, and it really showed. Toyota caught me entirely by surprise at the Auto Show, and the exterior of the car made me want to test drive it. Unfortunately, this was absolutely the worst car I drove. A car is a cabin, a driving experience, and a driver experience, but Toyota’s changes are entirely superficial. The RAV4 was a total dud. Sluggish off the mark, soft on the steering, and sloppy in the cabin, it kept me wanting more from the actual vehicle. Looks great on the outside, but that’s all.

Alfa Romeo Stelvio: When I rented an SUV at the Marseille airport for my trip up into the Alps, imagine my surprise when they handed me the keys to a fairly new Alfa Romeo. Ooh la la! I loved the way the Stelvio handled those Alpine roads, and the smaller diesel engine had plenty of capable power. The infotainment system was a bit of a disappointment, as it wasn’t a touch-sensitive screen. The cargo area was a bit of a frustration: while we got eight bags in there, the compartment wasn’t straightforward to work with.

The Subarus – Forester & Outback: I enjoyed the Subaru build quality, and the Boxer engine is a solid driver, but I found myself wanting a hybrid of the two. Either give me an Outback with the moonroof of the Forester, or give me the Forester with the tech stack of the Outback. Each felt like it was missing something.

Hyundai Tucson and Santa Fe: While Hyundai is making a quality product that is comfortable to drive, it’s hard for me to balance the generic cabin experience with the car that it is part of. Zippy engines, interesting technology choices. A cabin that feels like a sanitized doctor’s office.

Audi Q3: This was the car that I almost bought. And maybe if the dealer had told us what it would actually cost to own one, I would’ve, but his sales games were a total turn-off. The tech stack in this car was the best in class, with wireless CarPlay and a really interesting cockpit display that had live traffic data in the gauge cluster, and the Q3 was at the top of my list. What it came down to was worse fuel economy and twenty fewer horsepower in the engine. The off-the-block feeling of the Q3 didn’t come anywhere close to matching our final choice.

Volvo XC40: The winner was the XC40 T5 Inscription in Denim Blue. 247 hp under the hood, a stellar infotainment cluster (sadly, no wireless CarPlay to be found yet), a roomy and comfortable cabin, plenty of storage space that was well constructed and laid out, and the best looking exterior of the bunch. Couple that with Volvo’s safety record and the best Pilot Assist system short of a Tesla and you have the car that won my brain and my heart.

So How’d The Scoring System Work?

Here’s the final formula:

{Interior Rating}+{Cargo Space Rating}+2*{Intangibles Rating}+2*{Drive Test Rating}+{Passenger Experience Rating}+IF({Mileage}>25,3,0)+IF({Cargo Space}>23,3,1)+IF({MSRP Price w/ Options}<42000,3,1)+{Car Tech Stack}+IF({Approx Payment}<450,2,1)

The beginning of the formula is all about 5-star ratings. A rating of the interior and a rating of the cargo space begin the process. I decided that I actually did care about how the car made me feel, and how I felt about its driving ability, so each of those ratings was doubled in the final score (up from a 1x value in the initial version). If the mileage was good, I wanted that to count for a significant bump, same as the cargo space. I wanted to penalize highly expensive vehicles, same with the financing situation, and lastly, I wanted to include the tech stack’s rating separate from the rest.
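Here is the same formula restated as a Python function, in case that is easier to read than AirTable syntax. Treat it as a sketch: the argument names are my stand-ins for the AirTable fields, and the units (miles per gallon, cubic feet, dollars) are assumed from context, not taken from the base itself.

```python
def car_score(interior, cargo_rating, intangibles, drive_test, passenger,
              tech_stack, mileage, cargo_space, msrp, payment):
    """Restates the final AirTable car formula; argument names are stand-ins for the fields."""
    # Ratings counted once.
    score = interior + cargo_rating + passenger + tech_stack
    # Ratings counted twice: how the car felt and how it drove.
    score += 2 * intangibles + 2 * drive_test
    # Threshold checks: clearing the bar earns the bonus, missing it earns the smaller value.
    score += 3 if mileage > 25 else 0
    score += 3 if cargo_space > 23 else 1
    score += 3 if msrp < 42000 else 1
    score += 2 if payment < 450 else 1
    return score
```

Assuming every rating field sits on the five-star scale, a car with perfect ratings that clears every threshold tops out at 51 points, and 11 of those come from the threshold checks alone.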

And so it came down to the XC40 and the Q3 in the final appraisal. We let both dealers know we wanted a final price, we started the finance paperwork, and set that process in motion. Audi failed pretty spectacularly in the closing of the deal. Our salesperson wouldn’t tell us the cost of a Prestige model or try and find one for us, and it was a huge frustration. Volvo was the opposite. Shawn Hirsch at Volvo Cars Silver Spring was excellent to work with. I never felt any pressure, except around the matter of our trade-in, which was a garbage fire of a process.

What Worked With The Rubric? What Didn’t?

Like any testing scale, there are going to be some intangible items, and some tangible items. You can compare numeric values when they exist, but what happens when they don’t? How do you decide how to weight these factors?

You’ve got to establish your own priorities for the product you are in the market for. If you’re looking at MDMs, and you want a robust REST API, you’re going to need to plan for that and evaluate it based on your use-case criteria. You’re going to want to weight that value based on two factors: how important it is to your use-case, and how good the result is. That’s going to give you what amounts to a vector: a scalar value for how good it is, and a directional value for how closely the product aligns to your needs. It can be the best API in the world, but if it doesn’t allow you to, say, easily remap the owner of a device from the API, you’re going to tank it.
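One way to put that two-factor weighting into practice is to multiply importance by quality for each criterion, and to zero out any product that fails a hard requirement. This is a minimal sketch, not a rubric I actually used: the `Criterion` class, the 1-5 and 0-5 scales, the choice to treat "tank it" as a score of zero, and the example criteria are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    importance: int          # how much it matters to your use case (assumed 1-5)
    quality: int             # how well the product delivers it (assumed 0-5)
    must_have: bool = False  # a requirement the product is not allowed to fail


def product_score(criteria):
    """Sum importance * quality, but return 0 if any must-have criterion scores 0."""
    if any(c.must_have and c.quality == 0 for c in criteria):
        return 0
    return sum(c.importance * c.quality for c in criteria)


# Hypothetical MDM evaluation: the API matters most and is a must-have.
good_fit = [Criterion("REST API", 5, 4, must_have=True), Criterion("Design UX", 3, 5)]
missing_api = [Criterion("REST API", 5, 0, must_have=True), Criterion("Design UX", 3, 5)]

print(product_score(good_fit))     # 5*4 + 3*5 = 35
print(product_score(missing_api))  # must-have failed, so 0
```

The must-have gate keeps a product with gorgeous UX from outscoring one that actually does the thing you need.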

What I found worked and didn’t with the rubric was interesting. I found that I liked all the cars that I drove while I was driving them. Well, except that Toyota. I knew I didn’t like that one almost before we got out of the dealer parking lot. But the rest of them all felt good immediately. I had to revise my ratings over time. An immediate evaluation would often lead to a less critical result because I wanted to like them. I would overlook some things on initial test — again, except for the Toyota, it really was that bad, y’all — that I would come back to in the days following my initial test drive. The important parts of this process can’t be the result of snap judgments during or immediately after your time with the sales folks and the product. You’re going to need to stew on this. I changed ratings multiple times to weight different cabin features and drive experiences. I checked and double-checked statistics.

I made a change toward the end of the process to the scoring system. This is the sort of thing that usually feels like a no-no, right? Like changing the rules after the game has started? It feels wrong to do that. But it’s not wrong at all. In fact, you should absolutely revisit your scoring rubric if it starts showing you patterns that don’t match your experience. What I found out was: all of the Hyundais were scoring the same as the Q3 and the XC40, when my opinions around them were clearly a tier apart.

Why was my formula doing that?

Well, the Hyundais both had more cargo room AND better fuel economy than Volvo and Audi models. They were getting 6 more points, and that was making up for a substantial difference in intangible and cabin ratings. In short, though they weren’t achieving on individual stats, they were making it up due to a single over-sized category boost.

I had to change my rubric to match the emotional side of the decision as well as the logical side. In software, this comes down to how a piece of software looks and operates. Design is how it works, and part of how it works is what it looks like.

I am thrilled to own a new Volvo XC40, and a month after purchase, I’m still as excited as I was on day one. I love the driving experience, the pilot assist is a life-saver in heavy freeway traffic, the stereo and infotainment system are magical, and the cargo space is everything I was looking for.

If you’re interested in a copy of my AirTable, happy to arrange one. Here’s the final version in read-only format.